Gian.cool Gianfranco's blog

-

Small coding models on Terminal-Bench 2

Updated on: April 23rd 2026

Original date: Feb 26th 2026

Frontier models get most of the headlines, but the more interesting race is happening one tier down. Here’s how open-weight and smaller models stack up on Terminal-Bench 2.0.

Chart: Benchmark Comparison, Small Coding Models (Terminal-Bench 2.0)

Source: Terminal-Bench 2.0 leaderboard. All Qwen3.5 MoE models use activated parameter counts (A-suffix). K2.5-1T-A32B is a 1T-parameter sparse MoE from Moonshot AI with 32B active parameters.

The top of the chart is no longer just a Qwen3.5 story. Qwen3.6-27B now leads this group at 59.3%, outperforming even the much larger Qwen3.5-397B-A17B at 52.5%. Qwen3.6-35B-A3B also lands in the top tier at 51.5%, suggesting Alibaba’s newer generation is pushing small-model coding performance meaningfully higher.

Just below that, the older leaders still hold up well. K2.5-1T-A32B scores 50.8%, Qwen3.5-122B-A10B reaches 49.4%, and Gemma4-31B comes in at 42.9%. In the same general size class, Qwen3.5-27B posts 41.6%, Qwen3.5-35B-A3B scores 40.5%, and Gemma4-26BA4B trails at 34.2%.

GPT-OSS-120B at 18.7% remains the clearest outlier. Even at a much larger footprint than the 27B–35B class, it underperforms models that are dramatically smaller on disk. Once you add model size as a second dimension, the efficiency story becomes much more interesting than the raw leaderboard alone.

| Model | Score | Provider | GGUF | Size |
| --- | --- | --- | --- | --- |
| Qwen3.6-27B | 59.3% | Alibaba | unsloth/Qwen3.6-27B-GGUF | 16.8 GB |
| Qwen3.5-397B-A17B | 52.5% | Alibaba | unsloth/Qwen3.5-397B-A17B-GGUF | 244 GB |
| Qwen3.6-35B-A3B | 51.5% | Alibaba | unsloth/Qwen3.6-35B-A3B-GGUF | 22.1 GB |
| K2.5-1T-A32B | 50.8% | Moonshot | unsloth/Kimi-K2.5-GGUF | 621 GB |
| Qwen3.5-122B-A10B | 49.4% | Alibaba | unsloth/Qwen3.5-122B-A10B-GGUF | 76.5 GB |
| Gemma4-31B | 42.9% | Google | unsloth/gemma-4-31B-it-GGUF | 18.3 GB |
| Qwen3.5-27B | 41.6% | Alibaba | unsloth/Qwen3.5-27B-GGUF | 16.7 GB |
| Qwen3.5-35B-A3B | 40.5% | Alibaba | unsloth/Qwen3.5-35B-A3B-GGUF | 22 GB |
| Gemma4-26BA4B | 34.2% | Google | unsloth/gemma-4-26B-A4B-it-GGUF | 16.9 GB |
| GPT-OSS-120B | 18.7% | OpenAI | unsloth/gpt-oss-120b-GGUF | 62.8 GB |

Chart: Intelligence vs Size, Terminal-Bench Score vs Model Size (x-axis: Q4_K_M GGUF size in GB)

Note: Sizes are GGUF download sizes for the same quantization level, Q4_K_M. This chart compares storage footprint against Terminal-Bench 2.0 score, making the efficiency tradeoff more visible than the leaderboard alone.
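To put a number on the efficiency tradeoff, here is a minimal sketch that divides each score by its Q4_K_M download size, using only the figures from the table above. The "points per GB" ratio and the helper name `score_per_gb` are my own illustration, not a metric from the leaderboard.

```python
# (model, Terminal-Bench 2.0 score in %, Q4_K_M GGUF size in GB), copied from the table
MODELS = [
    ("Qwen3.6-27B", 59.3, 16.8),
    ("Qwen3.5-397B-A17B", 52.5, 244.0),
    ("Qwen3.6-35B-A3B", 51.5, 22.1),
    ("K2.5-1T-A32B", 50.8, 621.0),
    ("Qwen3.5-122B-A10B", 49.4, 76.5),
    ("Gemma4-31B", 42.9, 18.3),
    ("Qwen3.5-27B", 41.6, 16.7),
    ("Qwen3.5-35B-A3B", 40.5, 22.0),
    ("Gemma4-26BA4B", 34.2, 16.9),
    ("GPT-OSS-120B", 18.7, 62.8),
]


def score_per_gb(models):
    """Return (model, score points per GB) pairs, best ratio first."""
    ranked = [(name, score / size_gb) for name, score, size_gb in models]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


ranked = score_per_gb(MODELS)
for name, ratio in ranked:
    print(f"{name:<20} {ratio:5.2f} points/GB")
```

On these numbers Qwen3.6-27B comes out at roughly 3.5 points per GB, followed by Qwen3.5-27B and Gemma4-31B, while K2.5-1T-A32B lands last at under 0.1. A simple ratio like this rewards small files heavily, so treat it as a rough lens on the table and not as a ranking of model quality.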

Using the same `Q4_K_M` quantization across the board makes the size comparison much cleaner. The scatter plot shows the main takeaway immediately: Qwen3.6-27B sits in the best part of the frontier here, delivering the highest score while staying under 17 GB. Gemma4-31B and Qwen3.5-27B also look strong on a score-per-GB basis, while the giant MoE checkpoints buy you some extra capability at a very steep storage cost.

-

Opus 4.6 vs GPT Codex 5.3 vs GPT 5.4

Updated to include GPT-5.4 and Gemini 3.1 Pro

A comparison of benchmark metrics between Opus 4.6 and Codex 5.3 models.

Anthropic and OpenAI both recently published Terminal-Bench 2.0 results, but in separate charts and a table. I wanted the full picture, so I combined them.

Chart: Benchmark Comparison, Agentic Coding (Terminal-Bench 2.0)

Note: All OpenAI models are shown at the xhigh compute setting. GPT-5.2-Codex appears twice: 64.7% as reported by Anthropic, 64.0% as reported by OpenAI. Harnesses differ: Anthropic and Google used the Terminus-2 harness; OpenAI used Codex. Scores are not directly comparable across providers.

-

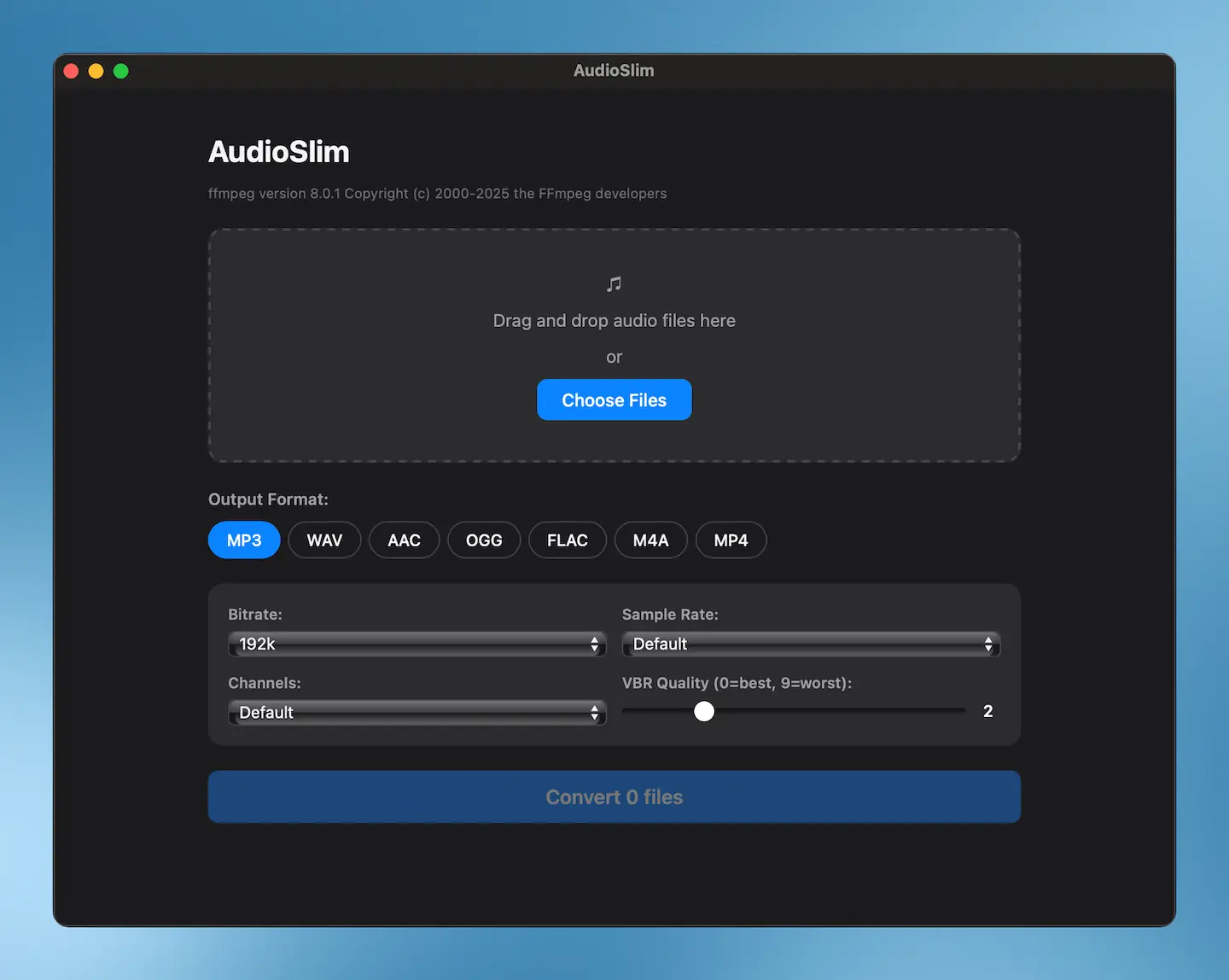

I Built a Desktop Audio Converter With Claude Code

I’ve been meaning to build this app, and I actually started around this time last year. But after learning how to “code”, or rather build, with AI coding agents like Claude Code, I just gave it this prompt:

> plan how to complete this app. it should allow one or multiple files to be selected or dragged (audio only) and then it should show a box to select which format to convert to e.g mp3, wav, aac,ogg, flac,m4a, mp4) plus certain options that come from ffmpeg to compress the file

It wrote this comprehensive plan, and then it went for it. I asked one small follow-up question to fix a minor UI color issue. And voilà!

A(I) built Audioslim, a native macOS app that converts audio between MP3, WAV, AAC, OGG, FLAC, M4A, and MP4. I built it with Claude Code, Anthropic’s AI coding assistant for the terminal.
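The post doesn't show Audioslim's internals, so the following is only a sketch of the kind of ffmpeg call a converter like this might wrap. The function name `build_ffmpeg_command`, the extension-to-encoder mapping, the default bitrate, and the `_converted` output naming are all assumptions made for illustration, not the app's actual code. Encoders such as `libmp3lame` and `libvorbis` also depend on how your ffmpeg was built.

```python
from pathlib import Path

# Assumed mapping from output format to an ffmpeg audio encoder (hypothetical choice).
AUDIO_ENCODERS = {
    "mp3": "libmp3lame",
    "wav": "pcm_s16le",
    "aac": "aac",
    "m4a": "aac",
    "mp4": "aac",
    "ogg": "libvorbis",
    "flac": "flac",
}
# Formats where a target bitrate does not apply.
LOSSLESS = {"wav", "flac"}


def build_ffmpeg_command(src, fmt, bitrate="128k"):
    """Build (but do not run) an ffmpeg argument list for one audio conversion."""
    fmt = fmt.lower()
    if fmt not in AUDIO_ENCODERS:
        raise ValueError(f"unsupported format: {fmt}")
    src = Path(src)
    # Write next to the source under a new name so the input is never overwritten.
    dst = src.with_name(f"{src.stem}_converted.{fmt}")
    cmd = [
        "ffmpeg",
        "-n",                 # refuse to overwrite an existing output file
        "-i", str(src),       # input file
        "-vn",                # drop any video stream, keep audio only
        "-c:a", AUDIO_ENCODERS[fmt],
    ]
    if fmt not in LOSSLESS:
        cmd += ["-b:a", bitrate]  # target audio bitrate for lossy output
    cmd.append(str(dst))
    return cmd


print(build_ffmpeg_command("song.wav", "mp3"))
```

To actually convert a file, the returned list can be passed to `subprocess.run(cmd, check=True)`, assuming `ffmpeg` is installed and on your `PATH`. Building the command as a list instead of a shell string avoids quoting problems with file names that contain spaces.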